Introduction

Data teams are expected to deliver trusted data quickly for dashboards, reporting, and AI use cases. However, in many organizations, pipelines break often, refresh cycles slip, and data quality issues are found too late. When data becomes unreliable, teams waste time firefighting, and business decisions slow down because people stop trusting the numbers.

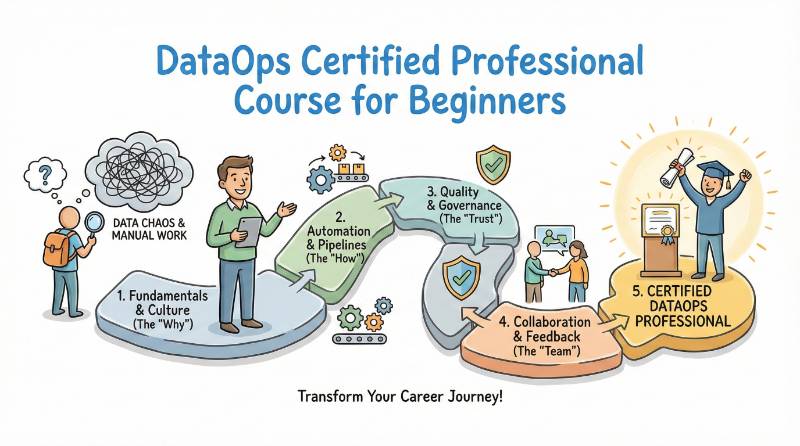

DataOps solves this by applying proven software delivery habits to data work. It focuses on automation, version control, testing, monitoring, and repeatable release practices for data pipelines. The DataOps Certified Professional (DOCP) program is built to help engineers and managers learn these practical methods and apply them in real projects.

In this guide, you will learn what DOCP covers, who should take it, what skills you will gain, how to prepare with clear timelines, and how to choose the next certification based on your role. You will also get learning paths and career-focused FAQs to help you decide with confidence.

What DOCP is

DataOps Certified Professional (DOCP) is a certification program focused on building and operating reliable data pipelines using DataOps practices. It validates that you can deliver data in a repeatable way with automation, testing, monitoring, and clear operational discipline.

DOCP covers the full lifecycle of data delivery, including ingestion, transformation, orchestration, data quality checks, observability, incident handling, and basic governance habits. The main idea is simple: data should be treated like a production system, so teams can trust the outputs and improve speed without increasing risk.

Why DOCP matters in real jobs

DOCP matters because real data work is about reliability, not one-time pipeline builds. It helps you prevent late refreshes, broken dashboards, and silent data errors by using automation, testing, safe reruns, and monitoring.

It also makes teams faster with less risk. When data changes are versioned, validated, and deployed in a controlled way, production failures decrease and trust in data improves. In daily work, stakeholders keep asking the same questions:

- Is the data correct?

- Is it updated on time?

- Can we trust the dashboard?

- Can we find issues early?

- Can we change pipelines safely without breaking reports?

DOCP teaches you how to handle these daily questions. You learn to catch issues early, recover faster, and reduce repeated failures by adding simple checks and clear operational steps. Over time, your work becomes more predictable, and stakeholders trust your outputs more.

About the provider

The DOCP program is provided by DevOpsSchool, which is known for career-focused learning paths that combine practical skills with structured certification preparation.

The provider’s approach typically focuses on real job readiness, meaning you learn how to apply DataOps practices in real pipelines, not only in theory. This helps working engineers and managers build confidence in automation, quality checks, monitoring, and reliable delivery habits.

Certification roadmap table

The table below lists each track with its level, intended audience, prerequisites, skills covered, and recommended order.

| Track | Level | Certification | Who it’s for | Prerequisites | Skills covered | Recommended order |
|---|---|---|---|---|---|---|
| DataOps | Professional | DataOps Certified Professional (DOCP) | Data Engineers, Platform teams, SRE, Managers | SQL basics, pipeline basics | DataOps workflow, quality, monitoring, governance basics | 1 |
| DevOps | Professional | DevOps certification track | DevOps, Platform, Cloud engineers | Linux + Git basics | CI/CD, automation, delivery workflow | 2 |
| DevSecOps | Professional | DevSecOps certification track | Security + DevOps/Platform roles | CI/CD awareness | Secure delivery, compliance habits, controls | 3 |
| SRE | Professional | SRE certification track | SRE, Platform reliability roles | Monitoring basics | Reliability, incidents, observability habits | 3 |
| AIOps/MLOps | Professional | AIOps/MLOps certification track | Ops automation + ML platform roles | Monitoring + pipeline basics | Automation + monitoring for ops and ML workflows | 4 |
| FinOps | Practitioner/Professional | FinOps certification track | Cloud owners, Managers, Platform roles | Cloud basics | Cost visibility, guardrails, optimization | 4 |

How to use this table:

Start with DOCP if you work with data pipelines or data platforms. Then add a cross-skill track (DevOps/SRE/Security/FinOps) based on your job needs.

DataOps Certified Professional (DOCP)

What it is

DOCP is a certification that teaches DataOps practices for stable and predictable data delivery. You learn how to build pipelines with automation, quality checks, monitoring, and safe changes so teams can trust the output.

Who should take it

- Data Engineers building ETL/ELT pipelines

- Analytics Engineers managing transformations and models

- Platform/Cloud Engineers supporting data platforms

- SRE/Operations teams supporting pipeline reliability

- Security Engineers working on data access and controls

- Engineering Managers who want predictable delivery and fewer incidents

Skills you’ll gain

- Pipeline lifecycle thinking: build, release, run, improve

- Quality checks: schema checks, null checks, duplicate checks, range checks, freshness checks

- Safe changes: versioning mindset, controlled rollout, rollback thinking

- Monitoring and alerting: failures, late runs, freshness issues, unusual volume changes

- Governance basics: ownership, access control thinking, documentation discipline

- Operational habits: runbooks, incident handling, prevention steps
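The quality checks listed above can be illustrated with a small sketch. This is not taken from the DOCP material; it is a minimal example that assumes a batch arrives as a list of dictionaries, and the function name `run_quality_checks`, the column names, and the range limits are all hypothetical.

```python
from typing import Any

Row = dict[str, Any]


def run_quality_checks(
    rows: list[Row],
    key: str,
    required: list[str],
    ranges: dict[str, tuple[float, float]],
) -> list[str]:
    """Return readable failure messages; an empty list means the batch passed."""
    failures: list[str] = []
    expected_columns = set(required) | {key}
    seen_keys: set[Any] = set()

    for i, row in enumerate(rows):
        # Schema check: every expected column must be present in the row.
        missing = expected_columns - set(row)
        if missing:
            failures.append(f"row {i}: missing columns {sorted(missing)}")
            continue

        # Null check: required columns must carry a value.
        for col in required:
            if row[col] is None:
                failures.append(f"row {i}: {col} is null")

        # Duplicate check: the key column must be unique within the batch.
        if row[key] in seen_keys:
            failures.append(f"row {i}: duplicate {key}={row[key]!r}")
        seen_keys.add(row[key])

        # Range check: non-null values must fall inside the allowed bounds.
        for col, (low, high) in ranges.items():
            value = row.get(col)
            if value is not None and not (low <= value <= high):
                failures.append(f"row {i}: {col}={value} outside [{low}, {high}]")

    return failures


# Hypothetical batch with one duplicate, one out-of-range value,
# one null, and one row that is missing a column.
batch = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 1, "amount": -5.0},
    {"order_id": 2, "amount": None},
    {"order_id": 3},
]
failures = run_quality_checks(
    batch, key="order_id", required=["amount"], ranges={"amount": (0, 1000)}
)
for message in failures:
    print(message)
```

In a real pipeline, a non-empty failure list would typically stop the load step so that bad data never reaches downstream dashboards. Freshness checks are time-based and are usually handled by monitoring rather than per-row validation.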

Real-world projects you should be able to do after it

After DOCP, you should be able to handle real work expected in production teams. You should be comfortable building pipelines that run daily, detecting problems early, and protecting dashboards from bad data.

- Build a daily ETL/ELT pipeline that is safe to rerun

- Add automated quality checks that stop bad data early

- Set up alerts for failures, late runs, and freshness issues

- Create a simple runbook to troubleshoot common pipeline problems

- Plan safe backfills without breaking downstream dashboards

- Document dataset ownership and basic usage rules
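One common way to make a daily pipeline safe to rerun is to replace the whole partition for a run date inside a single transaction, so a second run overwrites instead of duplicating. The sketch below shows that idea with Python's built-in `sqlite3` module. The table `daily_sales`, its columns, and the function `load_partition` are hypothetical names chosen for illustration; a production warehouse would use its own partition-overwrite or merge mechanism.

```python
import sqlite3


def load_partition(
    conn: sqlite3.Connection, run_date: str, rows: list[tuple[str, float]]
) -> None:
    """Replace all rows for run_date in one transaction so reruns do not duplicate data."""
    # The connection context manager commits on success and rolls back on error.
    with conn:
        conn.execute("DELETE FROM daily_sales WHERE run_date = ?", (run_date,))
        conn.executemany(
            "INSERT INTO daily_sales (run_date, product, amount) VALUES (?, ?, ?)",
            [(run_date, product, amount) for product, amount in rows],
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (run_date TEXT, product TEXT, amount REAL)")

batch = [("widget", 120.0), ("gadget", 80.0)]
load_partition(conn, "2024-01-01", batch)
load_partition(conn, "2024-01-01", batch)  # rerun of the same day

row_count = conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0]
print(row_count)  # the rerun replaced the partition, so the count stays at 2
```

The same function can serve a backfill: calling it once per historical date rewrites only those dates and leaves every other partition untouched.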

Preparation plan (7–14 days / 30 days / 60 days)

This plan is designed for working professionals. Choose the timeline based on your current experience and daily time.

7–14 days (Fast revision)

Best if you already work with data pipelines and need quick revision.

- Learn DataOps basics and common pipeline failure reasons

- Revise the most important quality checks

- Review monitoring signals: freshness, failures, latency

- Practice safe change steps and rerun/backfill thinking

- Make short notes and practice explaining workflows clearly

30 days (Balanced plan)

Best for most working engineers and managers.

- Week 1: DataOps basics + pipeline lifecycle

- Week 2: Automation + safe change process

- Week 3: Quality checks + basic governance habits

- Week 4: Monitoring + runbooks + incident practice

Outcome: one complete mini project (pipeline + checks + alerts + documentation).

60 days (Deep learning + portfolio)

Best if you want strong confidence and a portfolio-ready project.

- Build deeper understanding of reliability and scaling patterns

- Add stronger testing coverage and release discipline

- Practice incident handling and prevention habits

- Strengthen governance: ownership, access, documentation

- Build a capstone pipeline project and present it clearly

Outcome: a portfolio project that shows real production readiness, not only scripts.

Common mistakes

- Treating DataOps as only tools, not workflow

- No clear rules for “correct data”

- Monitoring infrastructure but not data freshness and output validity

- Fixing the same issue manually again and again

- No safe plan for reruns and backfills

- Missing documentation, ownership, and runbooks

- Making changes directly in production without safe steps
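Several of these mistakes come from watching infrastructure while ignoring the data itself. As a rough sketch of data-level monitoring, the example below checks freshness (how old the last successful load is) and volume (how far today's row count is from a recent average). The function names, the 8-hour freshness limit, and the 50% volume tolerance are assumptions made for illustration, not values prescribed by DOCP.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean


def is_fresh(last_loaded_at: datetime, max_age: timedelta, now: datetime) -> bool:
    """True when the most recent load is no older than max_age."""
    return now - last_loaded_at <= max_age


def is_volume_normal(
    today_count: int, recent_counts: list[int], tolerance: float = 0.5
) -> bool:
    """True when today's row count is within tolerance of the recent average."""
    baseline = mean(recent_counts)
    return abs(today_count - baseline) <= tolerance * baseline


def collect_alerts(
    last_loaded_at: datetime,
    today_count: int,
    recent_counts: list[int],
    now: datetime,
    max_age: timedelta = timedelta(hours=8),
) -> list[str]:
    """Return alert messages for stale data or unusual volume."""
    alerts: list[str] = []
    if not is_fresh(last_loaded_at, max_age, now):
        alerts.append(f"stale data: last load at {last_loaded_at.isoformat()}")
    if not is_volume_normal(today_count, recent_counts):
        alerts.append(f"unusual volume: {today_count} rows today")
    return alerts


# Hypothetical scenario: the last load finished 13 hours ago and volume dropped sharply.
now = datetime(2024, 1, 2, 9, 0, tzinfo=timezone.utc)
alerts = collect_alerts(
    last_loaded_at=datetime(2024, 1, 1, 20, 0, tzinfo=timezone.utc),
    today_count=300,
    recent_counts=[1000, 1100, 900],
    now=now,
)
for alert in alerts:
    print(alert)
```

Passing `now` explicitly keeps the checks easy to test; a scheduler would normally supply the current UTC time and route the returned messages to whatever alerting channel the team uses.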

Best next certification after this

A strong next step depends on your career goal:

- If reliability is your focus, move toward SRE and observability habits

- If security and compliance matter, move toward secure delivery and governance

- If you want platform ownership, strengthen DevOps and cloud platform skills

- If cost is a big pain, add FinOps and cost controls

Choose your path (6 learning paths)

DevOps path

Choose this if you want stronger automation and delivery workflow habits.

You focus on repeatable releases, CI/CD thinking, and environment stability.

This helps you run pipelines with less manual work and fewer surprises.

DevSecOps path

Choose this if security and compliance are important in your data platform.

You focus on access control thinking, audit readiness, and safe delivery habits.

This helps you reduce risk and avoid last-minute security blockers.

SRE path

Choose this if reliability is your main responsibility.

You focus on monitoring, incident response, and prevention habits.

This helps you reduce pipeline downtime and speed up recovery.

AIOps/MLOps path

Choose this if you support ML systems or large automation workflows.

You focus on stable inputs, monitoring signals, and operational readiness.

This helps prevent model issues caused by bad or late data.

DataOps path

Choose this if you want end-to-end ownership of data delivery.

You focus on orchestration, quality checks, governance basics, and observability.

This builds a strong “production data engineer” profile.

FinOps path

Choose this if cost control and efficiency matter.

You focus on cost visibility, guardrails, and optimization habits.

This helps reduce cloud waste and improve spending control.

Role → Recommended certifications mapping

| Role | Recommended direction | Why it fits |
|---|---|---|
| DevOps Engineer | DevOps basics → DOCP | Applies delivery discipline to data pipelines |
| SRE | Reliability + observability habits → DOCP | Makes pipelines stable and incident-ready |
| Platform Engineer | Platform/orchestration → DOCP | Data platforms need repeatable workflows |
| Cloud Engineer | Cloud basics + security basics → DOCP | Data workloads are cloud-heavy and change often |
| Security Engineer | Secure delivery + governance mindset → DOCP | Helps with access controls and audit readiness |
| Data Engineer | DOCP first | Direct match for pipeline reliability and quality |
| FinOps Practitioner | Cost visibility + controls → FinOps direction | Helps reduce waste and add guardrails |
| Engineering Manager | Delivery metrics + governance + DOCP overview | Improves predictability and reduces incidents |

Comparison table (DOCP vs related tracks)

| Area | DOCP | DevOps | DevSecOps | SRE | AIOps/MLOps | FinOps |
|---|---|---|---|---|---|---|
| Main goal | Trusted data delivery | Faster software delivery | Secure delivery | Reliable systems | Stable ops + ML operations | Cost control |
| Main focus | Quality + monitoring + safe data changes | CI/CD + automation | Security checks + compliance | SLOs + incidents + observability | Monitoring + automation for ops/ML | Cost visibility + guardrails |
| Best for roles | Data/Platform/SRE/Managers | DevOps/Platform/Cloud | Security + Platform | SRE/Platform | ML + ops teams | Cloud owners + managers |
| Top problems solved | Wrong numbers, late data, broken dashboards | Slow releases, manual work | Risk, audit delays | Outages, slow recovery | Model/data instability | High cloud spend |

Next certifications to take

Same track (Deeper DataOps)

Choose this if you want to become a senior DataOps or data platform specialist.

Focus on deeper quality engineering, stronger governance, and advanced reliability patterns.

Cross-track (Broader engineering growth)

Choose this if you want Platform Engineer or Cloud Data Engineer growth.

Add DevOps and SRE habits for automation, monitoring, and scale.

Leadership (Lead / Manager / Architect)

Choose this if you want to lead teams and platforms.

Focus on governance programs, delivery metrics, standard runbooks, and predictable delivery.

Institutions that provide training and certification support for DOCP

DevOpsSchool

DevOpsSchool provides structured learning across multiple engineering tracks. It suits working professionals who want a clear roadmap and practical outcomes. DOCP preparation benefits from a job-focused approach and track-based learning.

Cotocus

Cotocus can be helpful if you want learning that connects strongly with real project delivery. It suits professionals who want implementation thinking and practical guidance. It also fits teams improving reliability and process.

Scmgalaxy

Scmgalaxy supports step-by-step learning and structured practice. It suits working professionals who prefer clear explanations and examples. This can help build confidence for real project work.

BestDevOps

BestDevOps is useful for learners who want simple explanations and a practical learning flow. It suits people learning alongside a full-time job. It supports job-ready understanding without heavy theory.

devsecopsschool.com

This is relevant if your DataOps work includes strong security and compliance needs. It supports secure delivery thinking and audit readiness habits. This is useful in regulated environments.

sreschool.com

This is useful if reliability and incident readiness are important. It supports monitoring and operational discipline that improves stability. These habits help reduce pipeline downtime.

aiopsschool.com

This is helpful when operations are large and automation becomes important. It supports learning around monitoring signals and response workflows. It can be useful in high-alert environments.

dataopsschool.com

This is aligned with core DataOps topics such as orchestration, quality checks, and observability habits. It is useful for learners who want a DataOps-only focus. It supports stable delivery habits.

finopsschool.com

This is relevant when cost and efficiency matter for cloud and data platforms. It supports thinking around cost visibility and governance controls. It helps when teams need cost accountability.

General FAQs

1) Is DOCP difficult?

DOCP is practical. If you already work on pipelines, it feels easier. If you are new to monitoring and failures, it takes more practice.

2) How much time do I need to prepare?

A quick revision plan can take 7–14 days. A balanced plan is 30 days. A deep, portfolio-focused plan is 60 days.

3) What prerequisites do I need?

Basic SQL and basic pipeline understanding are enough. Simple scripting and clear thinking help more than advanced theory.

4) Is DOCP only for Data Engineers?

No. Platform engineers, DevOps engineers, SRE teams, and managers also benefit because DataOps is about reliable delivery.

5) What is the biggest benefit in daily work?

Fewer repeated failures and faster recovery. You learn how to detect issues early and prevent them from repeating.

6) Will DOCP help me in interviews?

Yes. You can explain real skills like quality checks, monitoring, runbooks, and safe changes.

7) What projects should I build after DOCP?

Build one end-to-end pipeline with quality checks, alerts, and a runbook. This shows real production readiness.

8) Is DOCP useful even if my company uses modern data tools?

Yes. Tools do not solve weak workflows. DOCP teaches habits that make tools work better.

9) Should I do DevOps first or DOCP first?

If you already work in data, DOCP can be first. If you are new to delivery workflows, DevOps basics help first.

10) Does DOCP help managers?

Yes. It helps managers improve predictability, reduce incidents, and build clear ownership and process.

11) What career roles does DOCP support?

DataOps Engineer, Data Platform Engineer, reliability-focused Data Engineer, and analytics platform roles.

12) What should I focus on most while preparing?

Quality checks, monitoring, safe changes, reruns/backfills, and simple documentation.

FAQs on DataOps Certified Professional (DOCP)

1) What is DOCP in one line?

DOCP teaches you to deliver data pipelines with automation, checks, monitoring, and safe changes.

2) Who should take DOCP?

Data Engineers, Analytics Engineers, Platform/Cloud Engineers, SRE teams supporting data platforms, and managers who need stable data.

3) Is DOCP more about tools or process?

More about process and habits. Tools help, but workflow discipline is the real value.

4) What are the top skills DOCP builds?

Quality checks, monitoring, safe reruns/backfills, documentation discipline, and reliable delivery thinking.

5) How long does DOCP preparation take?

7–14 days (revision), 30 days (balanced), 60 days (deep + portfolio).

6) Do I need strong coding skills?

Basic scripting is enough. DOCP is about stable workflows, not complex coding.

7) What can I do after completing DOCP?

Build pipelines with automated checks, set alerts for late/failing jobs, manage safe reruns, and document ownership and fixes.

8) What should I do after DOCP?

Pick one direction: deeper DataOps, cross-track reliability skills, or leadership and governance focus.

Conclusion

DOCP is valuable because it trains you to deliver data the way mature teams deliver software: repeatable, testable, monitored, and owned. Instead of relying on manual checks and last-minute fixes, you learn how to build pipelines that can run daily with predictable outcomes and clear recovery steps.

If your work involves data pipelines, analytics delivery, or supporting AI and reporting systems, DOCP helps you reduce failures, improve trust, and speed up delivery without increasing risk. The best results come when you complete at least one end-to-end project with automated quality gates, safe reruns, and freshness monitoring, because that is what real jobs demand.